Katy Perry 2025 Lifetimes Tour

Tools

Runway

Kling

Higgsfield

ComfyUI

ControlNet

LoRA

Photoshop

Description

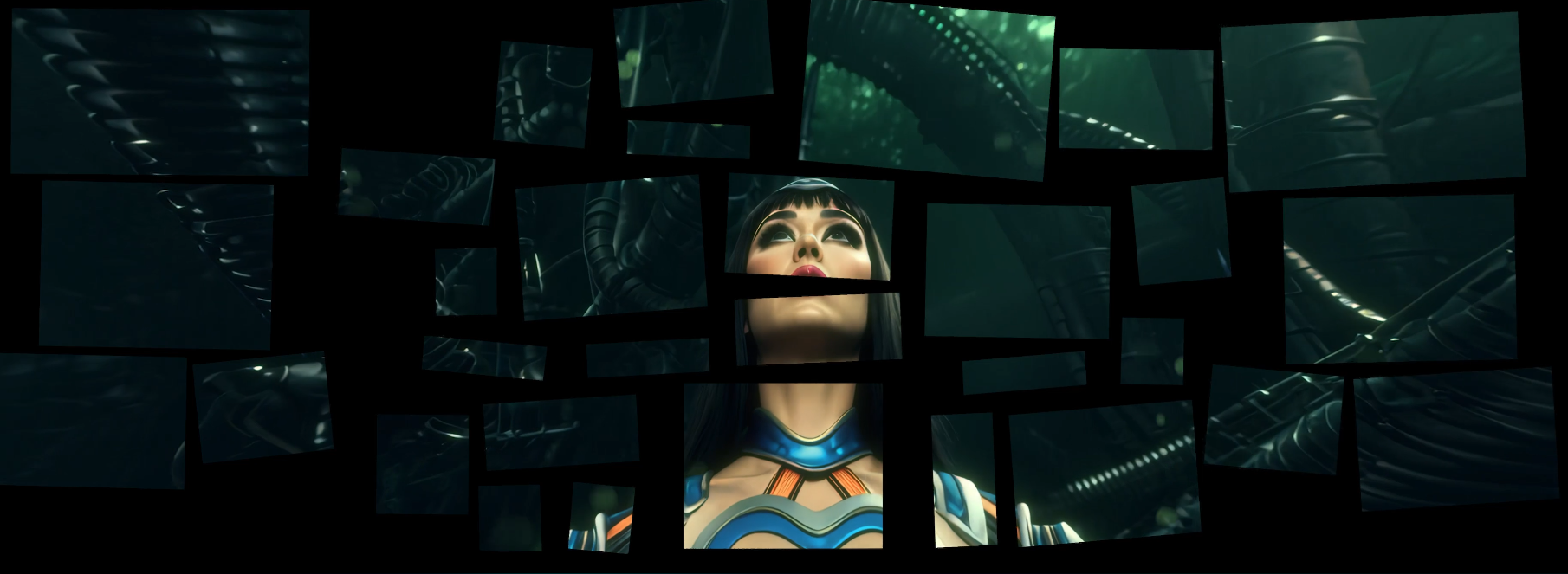

AI visual artist for six narrative sci-fi interludes in Katy Perry's Lifetimes Tour, built for a 30-screen stage system across a global run.

Seen by over 1 million fans globally (live and streaming), with live performances in 89 cities worldwide, localized across five continents.

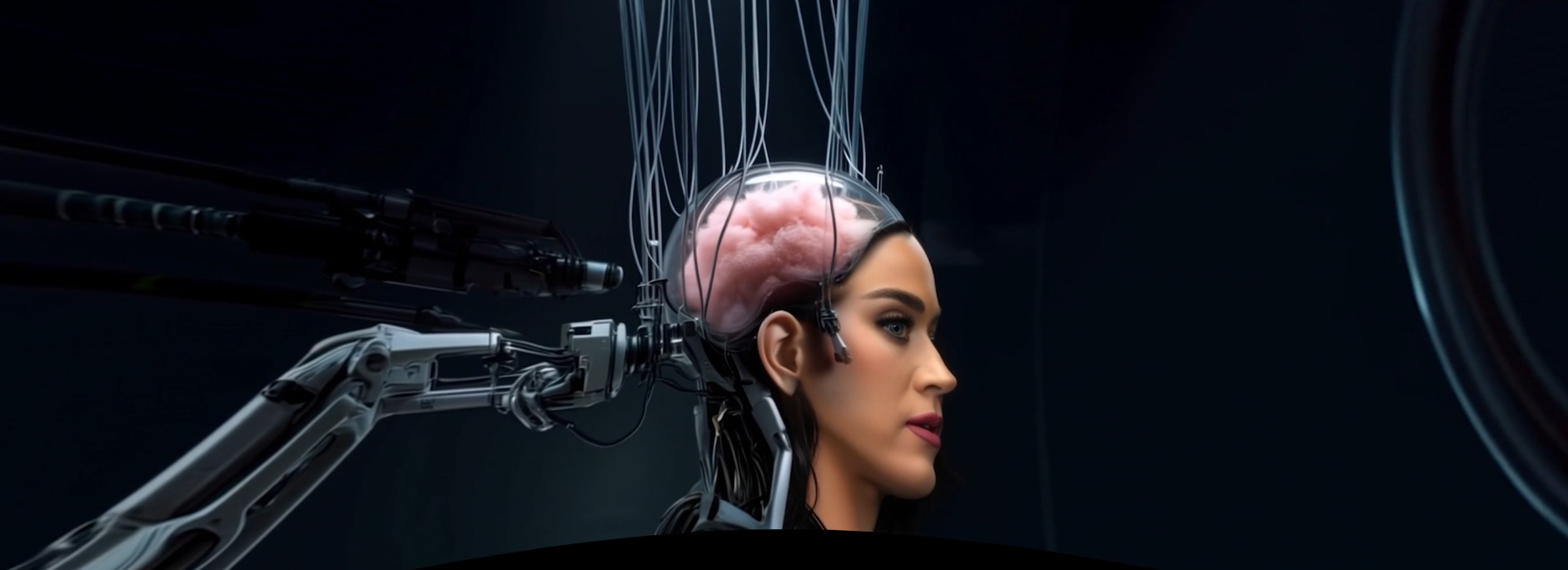

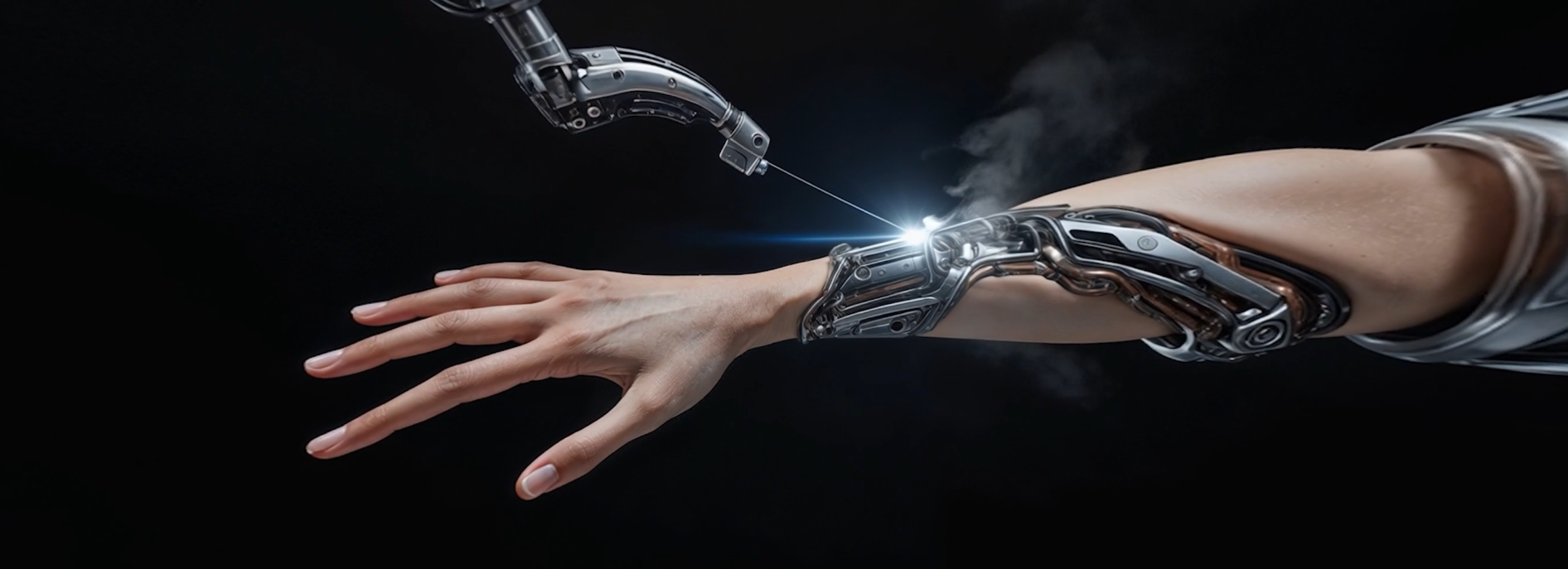

The Katy Perry Lifetimes Tour needed six interlude films to run as transition chapters across the show. They connect into a single story, Cyborg KP 143 vs. the AI mainframe, and were produced by a team of 4 AI artists, 1 creative art director, and 2 editors. This was early 2025, when most AI video tools were still immature and production workflows around them hadn't been fully figured out yet.

I was one of the four AI artists working on the shot breakdown. Tools in the pipeline included ComfyUI, Krea, Kling, Higgsfield, and Runway — each with different strengths and limitations at the time. Each day started with the art director breaking down the shot list, then shots got distributed and we worked through them independently. A big part of the image work happened in Photoshop — we weren't just regenerating until something worked; we'd pull the strongest elements from multiple generated variants and composite them together into one satisfying keyframe, then use that as the input for video generation. Final review was iterative with the art director until shots were approved.

Pipeline

Daily shot-list breakdown with the art director.

Per-artist shot assignment after breakdown.

Each artist runs end-to-end image-to-video production for assigned shots.

Iterative internal review and editor integration for interlude delivery.

What I built

Shot packages delivered across six interlude films.

Image-to-video outputs prepared for tour-stage projection workflows.

Consistency support for character identity and scene language across all interludes.

Character consistency and lipsync were the two things that look straightforward now but genuinely weren't solved in early 2025.

For character consistency, we approached it from three directions: training a dedicated character LoRA on KP143, training a separate costume LoRA, and using a Krea community LoRA that had aggregated a large volume of tagged reference imagery, more training data than we could build ourselves. Running all three in combination gave us enough visual anchoring to keep the character readable across six chapters and four artists.

For lipsync, the technology at the time wasn't reliable enough to work cleanly from raw outputs, so we had to do significant audio and image preprocessing upfront to give the lipsync tools a cleaner signal to work from.
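As a rough illustration of what stacking several LoRAs means numerically, here is a minimal toy sketch. It is not the production setup: the real work was done in node-based tools, and every name, matrix, and strength value below is a hypothetical placeholder. It only shows the general idea that each LoRA contributes a low-rank update `B @ A`, scaled by a per-adapter strength, on top of the same base weights.

```python
# Toy sketch of combining several LoRA adapters on one weight matrix.
# All names, shapes, and strengths here are illustrative assumptions,
# not values from the actual tour pipeline.

def matmul(a, b):
    """Plain-Python matrix product of two lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]

def apply_loras(base, loras):
    """Return base + sum(strength * (B @ A)) over all (B, A, strength) adapters."""
    merged = [row[:] for row in base]  # copy so the base weights stay untouched
    for b_mat, a_mat, strength in loras:
        delta = matmul(b_mat, a_mat)  # low-rank update for this adapter
        for i, row in enumerate(delta):
            for j, value in enumerate(row):
                merged[i][j] += strength * value
    return merged

# Hypothetical 2x2 base layer and two rank-1 adapters.
base = [[1.0, 0.0], [0.0, 1.0]]
character_lora = ([[1.0], [0.0]], [[0.0, 2.0]], 0.5)   # (B, A, strength)
costume_lora = ([[0.0], [1.0]], [[4.0, 0.0]], 0.25)

merged = apply_loras(base, [character_lora, costume_lora])
print(merged)
```

In practice the strengths are the knobs: turning one adapter up gives it more influence over the output, and adapters that touch the same weights can interfere with each other, so the balance is usually found by trial and review rather than set once.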