'The Wizard of Oz' experience at Sphere

2025 / AI Inference Artist @magnopus

Used ComfyUI and Google Veo 2 to generate high-fidelity AI-driven keyframes and video clips, with a focus on advanced prompt engineering and iterative visual refinement; collaborated with the VFX team to integrate assets into the compositing pipeline and keep the look stylistically coherent.

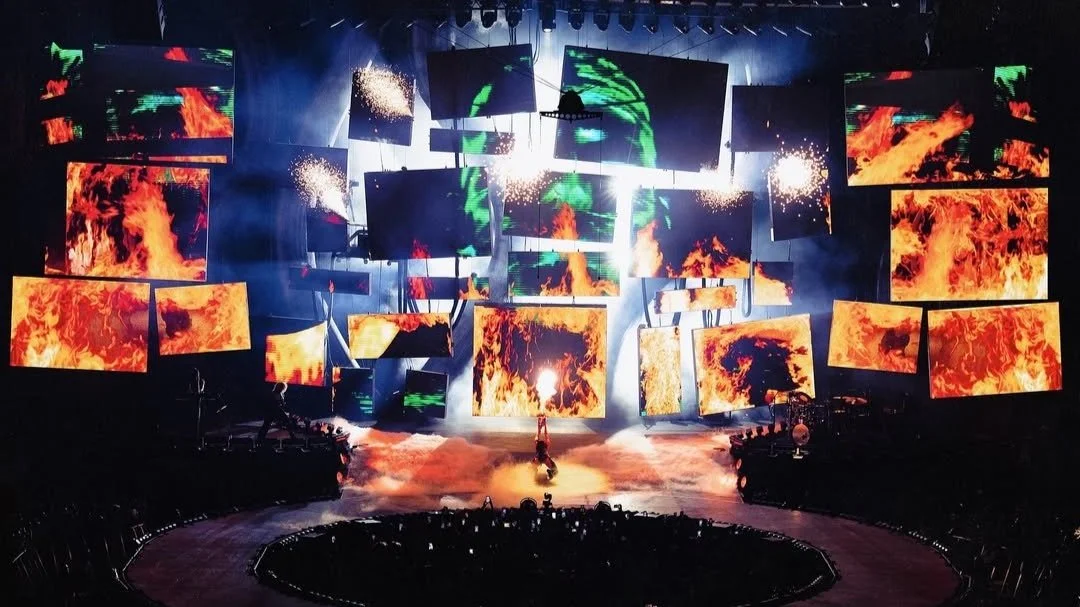

Katy Perry Lifetimes World Tour

2025 / AI Visual Creator @HFTF

Generated AI-driven keyframes and video clips for interlude content used in Katy Perry’s 2025 Lifetimes World Tour. Used ComfyUI, Krea, Kling, Higgsfield, and Runway to produce cinematic visuals based on the provided creative direction, contributing to a futuristic, cybernetic stage aesthetic. Focused on prompt engineering, iterative visual refinement, and asset delivery, keeping the work aligned with the tour’s overall visual language.

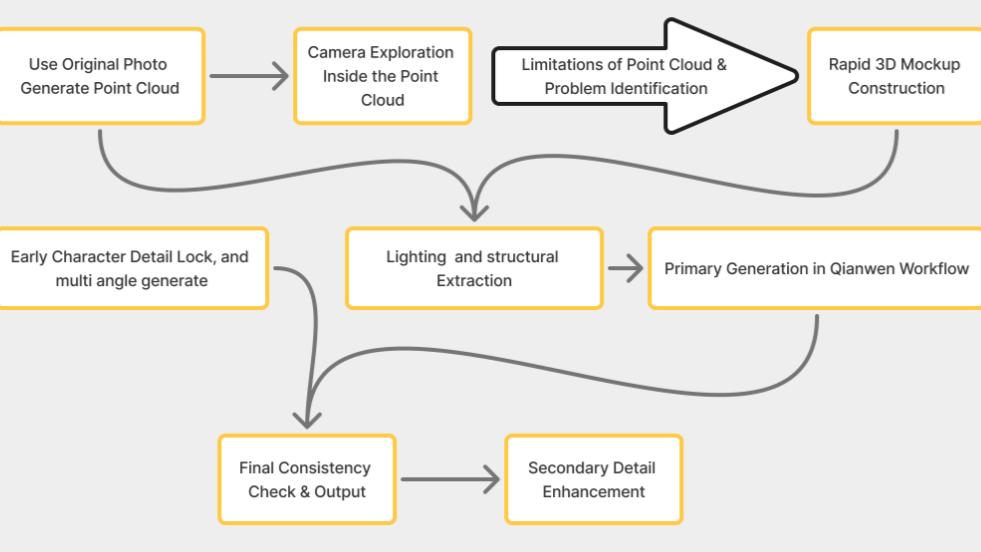

Generative Control Systems

2026 / AI R&D – Multi-View · 4K Wide Shot · Motion Control

This research investigates controllable generative pipelines across three production-critical challenges. The first is generating consistent multi-view outputs from a single image, using a 3D-assisted workflow (Gaussian Splatting + rapid mockup) to anchor camera, depth, and lighting logic. The second is maintaining character recognizability in 4K establishing wide shots, where subjects occupy minimal screen space, through world/character separation and staged refinement. The third is benchmarking motion control strategies across mid-shot and close-up conditions to balance motion accuracy, detail stability, and lighting coherence via hybrid anchor-frame workflows.

Full technical breakdown available upon request.

BuddyGPT - Context-Aware Desktop Companion

2026 / Personal Project / AI Agent

BuddyGPT is a lightweight desktop companion that lives in the corner of your screen. When woken via hotkey or click, it captures a screenshot of your last active window for context, then replies with short, practical, colleague-style answers that help you get unstuck rather than acting as a “do-it-for-me” agent.

The project emphasizes interaction design (wake → capture → ask → reply → auto-rest), app-aware context handling, optional web lookup, and clear privacy boundaries between local operations and what’s sent to the model.
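As a rough illustration of the wake → capture → ask → reply → auto-rest loop, here is a minimal state-machine sketch. It is not BuddyGPT's actual code: the state names, event names, and the `step`/`run` helpers are all hypothetical, chosen only to show how the loop could be modeled so that out-of-order events are ignored rather than acted on.

```python
from enum import Enum, auto


class State(Enum):
    """Hypothetical states for the companion's interaction loop."""
    RESTING = auto()    # idle in the corner of the screen
    CAPTURING = auto()  # grabbing context from the last active window
    ASKING = auto()     # waiting for the user's question
    REPLYING = auto()   # showing the short answer


# Assumed event names; each (state, event) pair maps to the next state.
TRANSITIONS = {
    (State.RESTING, "wake"): State.CAPTURING,        # hotkey or click
    (State.CAPTURING, "captured"): State.ASKING,     # context is ready
    (State.ASKING, "question"): State.REPLYING,      # user submitted a question
    (State.REPLYING, "idle_timeout"): State.RESTING, # auto-rest
}


def step(state: State, event: str) -> State:
    """Return the next state; events that do not apply leave the state unchanged."""
    return TRANSITIONS.get((state, event), state)


def run(events, start: State = State.RESTING) -> State:
    """Feed a sequence of events through the loop and return the final state."""
    state = start
    for event in events:
        state = step(state, event)
    return state


if __name__ == "__main__":
    final = run(["wake", "captured", "question", "idle_timeout"])
    print(final.name)
```

Keeping the transitions in one table means a stray event, such as a question arriving while the companion is resting, simply leaves the state where it is.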